Human Rights Watch (HRW) published a 42-page report in September 2020 which found that social media platforms were failing to preserve online content they had removed as terrorism-related, violently extremist, or hateful. The report argued that, as a result, both those who posted the material and those who carried out the acts it recorded were likely to escape being held to account.

Recent reports by the BBC and other media show that the failures highlighted in HRW’s 2020 report are still being repeated, and that even more material is probably being lost as platforms increasingly use artificial intelligence (AI) algorithms to select and remove offensive material without external human oversight.

It is understandable that platforms remove content that could incite violence or public disorder, or cause offence, and that they want to avoid the legal problems that displaying such material unchecked could cause.

In May 2022, four high-ranking members of the US Congress wrote to the chief executives of YouTube, TikTok, Twitter, and Meta (formerly Facebook) asking them to preserve and archive content on their platforms that might be evidence of war crimes in Ukraine. They accepted that blocking this content from the public may sometimes be warranted, but asked the companies to preserve the material as potential evidence.

YouTube confirmed that it had removed over 15,000 videos related to Ukraine in just 10 days in March 2022. In early April 2022, Facebook blocked hashtags used to comment on and document killings of civilians in the northern Ukrainian town of Bucha. This resulted from the platform’s use of automatic scanning to take down violent content, much of which stems from the torrent of disinformation, faked video and hate speech emanating from Russia’s “bot factories.” While the block was later rescinded, there is a fear that much vital evidence was lost in the intervening period.

Meta and YouTube told the BBC that they have exemptions for graphic material when it is in the public interest, and that they try to balance their duty to inform their wider audience with protecting users from harmful content.

The BBC quoted the US Ambassador for Global Criminal Justice, Beth Van Schaack, who said: “No-one would deny tech firms' right to police content. I think where the concern happens is when that information suddenly disappears.”

HRW, in a statement published on ReliefWeb, says that “while blocking this content from the public may sometimes be warranted, these companies should preserve the material as potential evidence”.

“Permanent deletion by moderators or artificial intelligence-enabled systems can undermine efforts to expose or prosecute serious abuse.”

The platforms say they do. The BBC contacted Ihor Zakharenko, who has been documenting attacks on civilians in Ukraine since the start of the Russian invasion. He provided four samples of footage he had taken of the murder of civilians last year, which the BBC tried to upload to Instagram and YouTube using dummy accounts. All four were deleted, three within a minute of posting and the fourth within ten minutes.

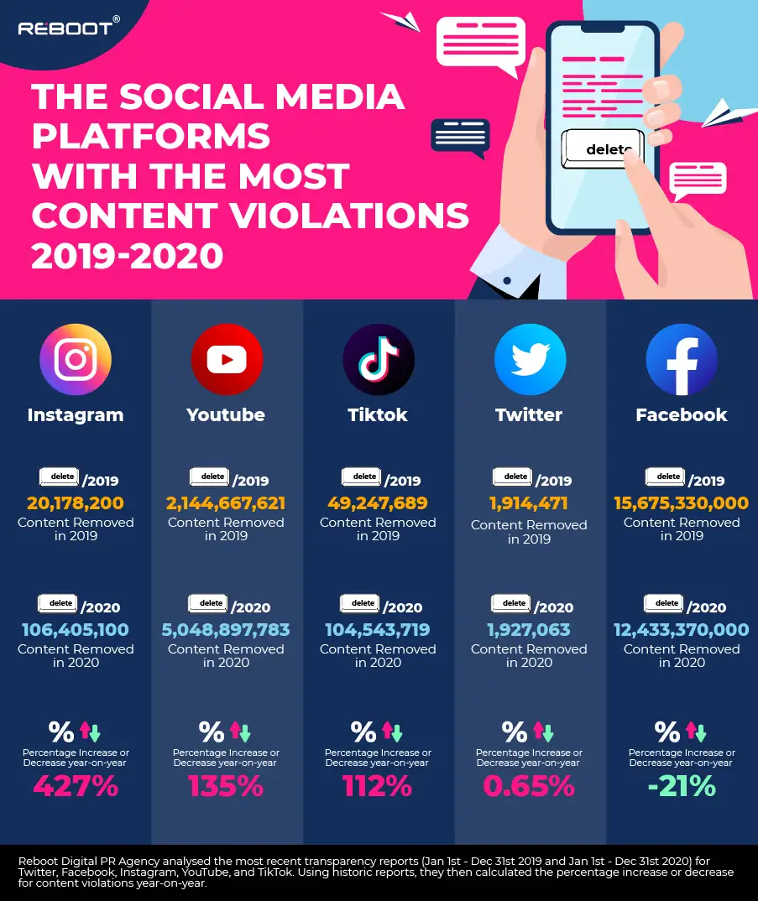

“Reeboot”, an agency that advises businesses on the use of the internet and social media, carried out a study in 2021 that showed an increase over the previous year in the removal of content by the main social media platforms. This is summarised in the accompanying graphic:

Social media companies say that for several years they have been under tremendous regulatory pressure to combat disinformation and fake news. They believe that some of what they are asked to remove is simply whatever governments and regulatory bodies declare to be fake, with or without evidence.

HRW has been advocating for the creation of a centralized “digital locker,” where the social media companies could store deleted content originating from war zones for assessment by third-party investigators. The companies resist this, probably because they do not want outsiders to gain access to their methodologies and policies, and instead turn the demands back onto the NGOs themselves.

A YouTube spokesman said: “Human rights organisations, activists, human rights defenders, researchers, citizen journalists and others documenting human rights abuses or other potential crimes should observe best practices for securing and preserving their content.”

The bottom line is that, in the modern world, social media content is all-pervasive and should be viewed as a fundamental tool for investigating war crimes in Ukraine and elsewhere.

It is time for the social media companies to live up to their obligations and develop appropriate, lawful mechanisms to preserve content that can help bring perpetrators of atrocities to account.
